Domain + Hosting + Functional Website

I have a business and need a free website.

- Never worry about paying for hosting and domain renewal fees ever again.

We specialize in building free websites for small businesses.

So G16framework eliminates the need for...

Website Design Cost

Say goodbye to exorbitant expenses associated with website design and development.

Domain & Hosting Cost

We take care of hosting server, domain, and SSL certificate renewal costs.

Maintenance Bill

Maintaining a website can be a daunting task, often requiring substantial financial resources.

Website Audit & SEO

Visibility plays a crucial role in the success of any website. G16framework recognizes this.

Small Business Owners' Feedback

Customers trust us to keep their websites operating at their best for less.

G16framework delivered outstanding results for our custom website design project. They understood our vision and created a unique and user-friendly website in less than 2 weeks.

Elizabeth B

Founder, Wellness Transformative

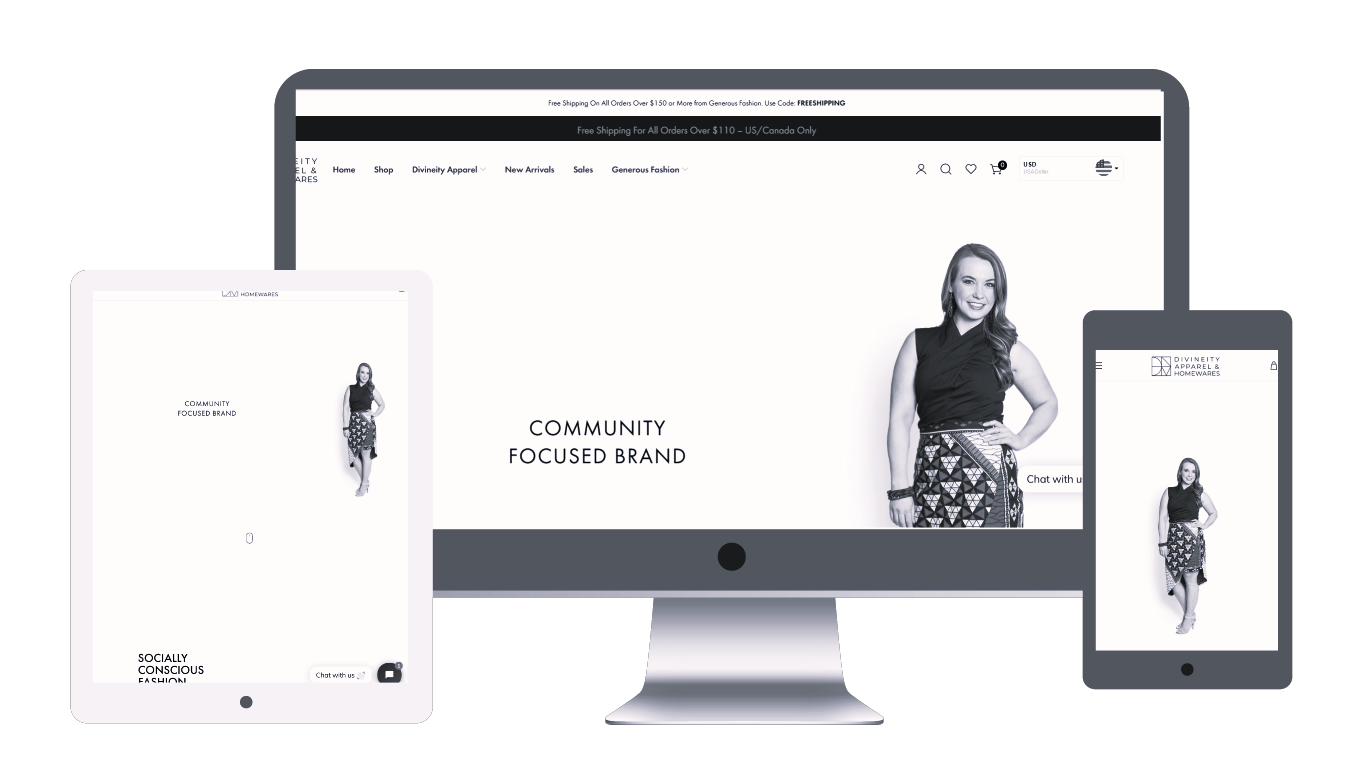

GMP exceeded expectations with the design of our African clothing online store, delivering a tailored website that met all the specified criteria and was finished ahead of schedule. Thumbs up.

Ajibola A

DivineityFashion

GMP developed a personalized website for our beauty salon that streamlined the appointment booking process. The project was finalized in November 2023. Thank you.

Sowemimo

Asiri Beauty Salon

G16framework Media Production excelled in creating our classified listing website, bandylist.com. They finished the project early and the website surpassed our expectations. Trusted and reliable.

Jenny R.

Bandylist Classified

Small Businesses On GMP Network

How It Works in 3 Steps to Get You on Board

Sign up

Fill out the subscription form.

Get your subscription approved

Your account is reviewed and approved.

You work with a site manager

After approval, GMP will assign you a dedicated website manager who will work closely with you.

Decide which pricing plan is best for you.

Free Plan

Generated Domain + Hosting

Great for small business owners who are trying out the platform and are not yet ready to go live.

- One-page website

- Website builder

- Business email

- Unique domain name

- Website redesign

- Website manager support

- Ongoing SEO

- AI-powered website

- Website audit

- Free website migration

- Pre-installed templates

- Free domain transfer

- Plesk cPanel

Starter Plan

Unique Domain + Hosting

A great plan for existing business owners who have already tried our website services.

- 1-page website

- Website builder

- Business email

- Unique domain name

- Website redesign

- Website manager support

- Ongoing SEO

- AI-powered website

- Website audit

- Free website migration

- Pre-installed templates

- Free domain transfer

- Plesk cPanel

Boss Plan

Unique Domain + Hosting + AI

An exceptional choice for ambitious business owners looking to elevate their brand.

- 5-page website

- Website builder

- Business email

- Unique domain name

- Website redesign

- Website manager support

- Ongoing SEO

- AI-powered website

- Website audit

- Free website migration

- Pre-installed templates

- Free domain transfer

- Plesk cPanel

Free Websites

Frequently Asked Questions

What comes with the free plan?

The free plan offers several features to help small business owners get started with their online presence. It includes a basic website builder with customizable templates, domain hosting, and limited storage space. However, keep in mind that some advanced features and functionality, including a “unique domain”, may not be available in the free plan.

Can I upgrade from the free plan to a premium plan?

You have the option to upgrade from the free plan to a premium plan in order to access additional features and benefits. With the upgrade, you can utilize premium templates, increase storage space, create professional email addresses, access advanced analytics, enable e-commerce capabilities, and more. This upgrade is an excellent opportunity to enhance and expand your online presence as your business progresses.

What is a website manager?

The website manager is a dedicated tech support team assigned to you when you subscribe to our premium plan. They provide small business owners with all the assistance needed to manage and control their website efficiently. Typically, they offer guidance on how to use user-friendly features, such as drag-and-drop editors, customizable layouts, content management systems, and the ability to add or modify elements on your website with ease. The website manager also simplifies the task of maintaining, updating, and optimizing your website, making the service indispensable for small businesses.

How does payment work?

The available payment options can differ based on the plan or service selected for your small business website. Typically, GMP uses a subscription-based payment system: you choose a plan and make monthly or annual payments. Moreover, you have the flexibility to cancel at any time without incurring any charges.

What is a technical domain?

A technical domain is a generated domain that is hosted on our server but not actually purchased. These domains can be used to test our services and remain in operation even on the free plan. The key distinction is that the character combination in the generated domain name cannot be altered. The upside is that you can transfer all your website files and designs from an existing generated domain on a free plan to a unique domain without losing any content. The transition is smooth, and your website manager handles everything required.